The history of website traffic is really the history of the internet itself. By charting how traffic changed, we can understand how the internet evolved into its modern form and what’s behind the waves of exponential growth.

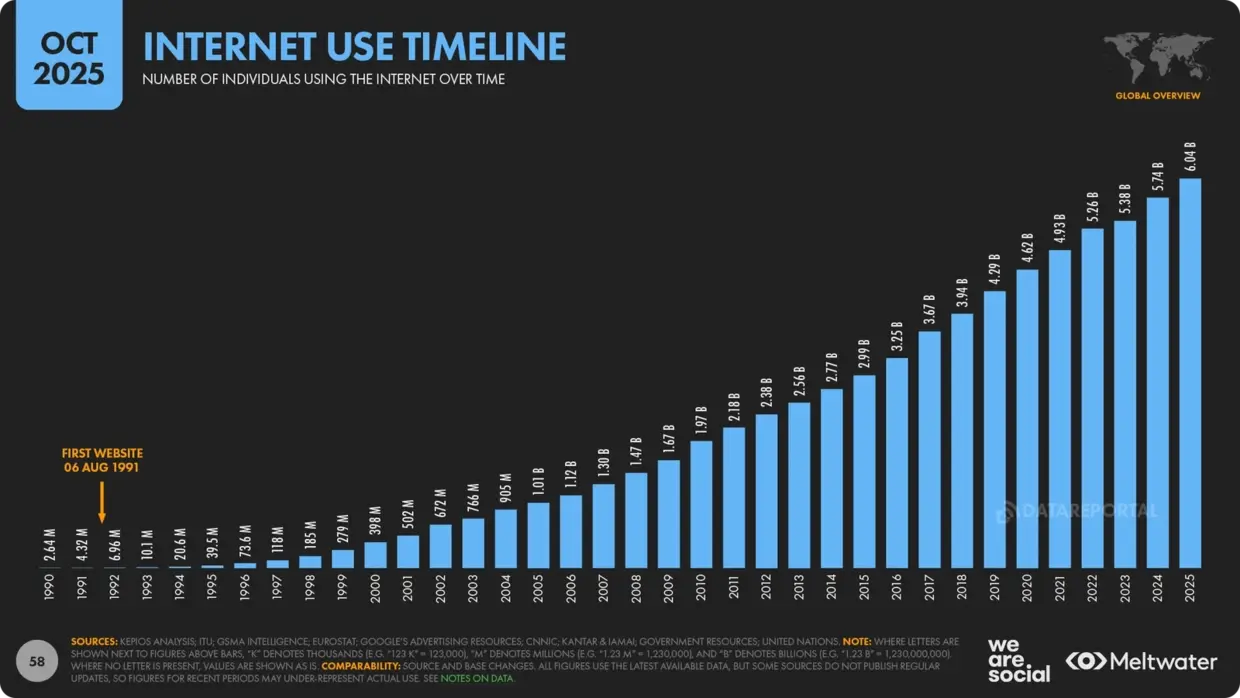

This thing that we all take for granted, checking it multiple times a day, is really just a collection of documents and folders- around 1.34 billion of them- that we collectively access. Today, the internet’s estimated 6 billion users produce 33 exabytes (that’s 33 million terabytes) of traffic every single day.

That’s about 5.5 GB per user, per day- numbers that would have shocked the early pioneers of cyberspace.

These numbers continue to grow every second, so understanding what drives them is important for anyone who lives in the digital age.

The Early Internet: 1960s-1980s

Total Traffic: Negligible- a few universities, government departments and researchers, starting at 4 connected nodes. By 1990, around 1 TB of data was being shared each month.

The earliest version of the internet wasn’t referred to as the internet at all- it was the ARPANET. Invented in 1969 by the US Department of Defence’s Advanced Research Projects Agency (ARPA), it connected universities and government contractors, allowing them to share computing resources and information remotely.

Using packet switching (the basic technology behind modern website traffic), ARPANET provided a secure, reliable method of communication between these organisations during the height of the Cold War. At its height, there were 30 institutions from Hawaii to Europe sharing research information via this system.

Researchers and staff from these 30 institutions formed the entirety of the traffic on this early internet precursor, so numbers were very low. There were no websites, so what little traffic there was was in the form of early emails and file transfers.

Rather than browsing, information was sent via File Transfer Protocol (FTP) and Telnet. Even when more modern versions of these protocols were adopted in 1983, it wouldn’t really be fair to call it “web traffic”, though it certainly was traffic.

Growth was slow, limited to governments, universities, and researchers, and in 1990, ARPANET was finally shut down.

The Very First Bots

This period also saw the very first internet bots, providing basic services to users in early chat apps and stopping servers from timing out due to a lack of human activity. While theorists like John von Neumann suggested as early as the 1940s- before the ARPANET was even conceived of- that these self-executing scripts could be used for sinister purposes, it wasn't until 1970 that the theory was tested.

The Creeper worm was developed by Bob Thomas as a proof-of-concept that showed that self-replicating programs were possible. It left the message “I’m the creeper: catch me if you can” but otherwise caused no damage.

With such small numbers of people using the system, this represented a reasonably large proportion of total traffic until email was invented the following year and quickly rose to prominence, accounting for around 75% of total traffic.

The Birth of the Web and Website Traffic: 1989-1995

Total Traffic: 1989- Estimates claim around 1 Million total users. 1995: Estimates vary between 16 and 44 Million Users.

Tim Berners-Lee is generally credited with creating the internet as we know it today- the World Wide Web. In 1989, while working at CERN, Berners-Lee proposed the World Wide Web project, and the first ever website, a static, text-based page explaining the project, followed shortly afterwards. In 2013, this proto-site was restored and is once again live as a historical document.

On this early site, you can see the bones of the sites that we all browse today; hyperlinks connect various pages, directing traffic. Though basic to modern eyes, this is the birth of the internet and of web traffic as we know it.

The Birth of Browsing

Now that websites existed, there had to be some way of accessing them. Berners-Lee and other computer scientists involved in the early web created browsers of their own, but they were technical and required a good level of knowledge to use. The very first of these was called Nexus and could only be run on the NeXT computer.

In 1993, Marc Andreessen and Eric Bina released the first widely popular graphical browser, the direct ancestor of the program you’re using to read this blog. Mosaic, as the browser was called, built on the previous applications, making them much more user-friendly.

These early browsers included staples like icons for navigation buttons and the ability to display more attractive fonts that we’d recognise today.

Using these new accessible, user-friendly browsers that didn’t require much in the way of technical knowledge, the number of websites quickly started to grow, and with them, the amount of traffic.

Early Adopters

Now that the internet was accessible to those who didn’t have a degree in computer science, businesses and hobbyists started to build their own sites. In 1994, Microsoft went online, offering contact information and technical FAQs for its products. That year also saw the birth of Amazon, selling books.

This is the real genesis of web traffic as we think of it today. Websites might have been basic and clunky to our more acclimated eyes, but they were absolutely revolutionary. Rather than a geeky hobby or a few researchers sharing information, the internet was now a commercial service.

With access to businesses and information, traffic grew and grew through the early 90s. As each new site came online, it created a snowball effect- with more things to browse, more and more traffic was being created.

The First Search Engines

With the rapid expansion of the World Wide Web, users needed some way of navigating beyond memorizing URLs. The very first search engine was invented in 1990 and was known as Archie. This basic application indexed the file names and contents of FTP sites, allowing users an easier way of choosing what they accessed.

Various iterations of Archie-like search tools evolved, each offering new features, with notable examples being:

- ALIWEB- “Archie-like Indexing For The Web” allowed people to find early websites, launched in 1993

- Wandex- an early crawler-based (like modern search engines) tool, launched in 1993

- WebCrawler- the first search engine to fully crawl websites, allowing people to search for exact terms, launched in 1994

In 1995, something approaching what we would think of as ‘modern’ search engines appeared. AltaVista was the first to allow users to search using natural language and find multimedia results, birthing the idea of SEO and manipulating how traffic found your site.

Yahoo! also launched this year, though it provided a different service, offering a curated directory rather than modern crawler-based searches.

These engines made finding specific content easy, greatly increasing the flow of traffic and paving the way for the Dot Com Boom of the late 90s and early 2000s.

Bots Unleashed

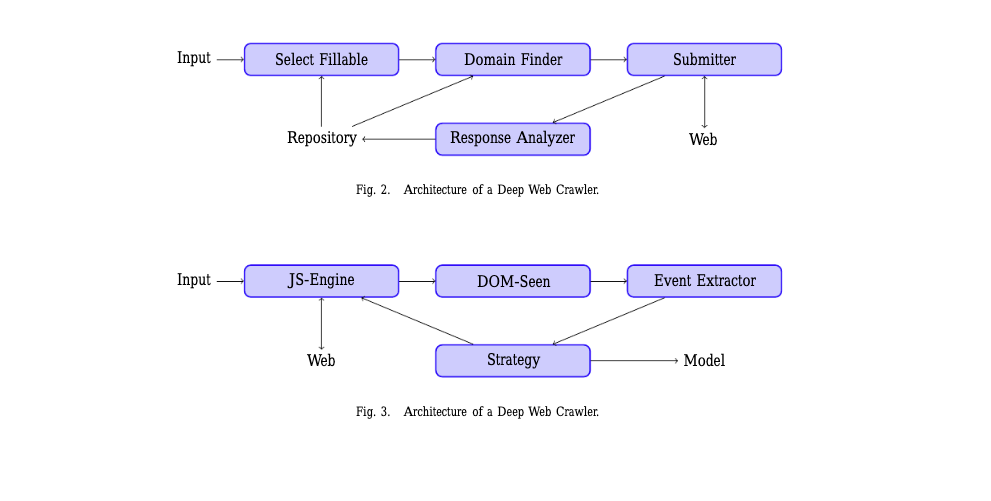

These new crawler-based search engines used bots to follow links and categorized content using algorithms. This allowed for much faster updating as the internet expanded and, eventually, allowed for searcher intent to be a major factor in deciding which pages were served up in results.
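To make the idea of a bot "following links" a little more concrete, here is a minimal sketch of a breadth-first crawl. It runs over a tiny made-up link graph held in memory rather than the real web, so the page names and the `TOY_WEB` structure are purely hypothetical, and real crawlers add a great deal on top (fetching, politeness rules, indexing).

```python
from collections import deque

# Hypothetical toy "web": each page maps to the pages it links to.
TOY_WEB = {
    "home": ["about", "products"],
    "about": ["home"],
    "products": ["home", "book-1", "book-2"],
    "book-1": ["products"],
    "book-2": ["products", "about"],
    "orphan": ["home"],  # nothing links here, so the crawl never reaches it
}


def crawl(start, links):
    """Breadth-first crawl: visit a page, then queue every unseen link on it."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        page = queue.popleft()
        order.append(page)
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return order


if __name__ == "__main__":
    print(crawl("home", TOY_WEB))
```

Notice that the `orphan` page is never discovered: a crawler can only find pages that something else links to, which is part of why links became so valuable.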

Directories, on the other hand, used human-driven categorisation to create intuitive links, ranking content by things like type and location, employing a human editorial team to evaluate submissions and rank them accordingly.

Wandex, WebCrawler and AOL all made use of this new bot technology, hugely reducing the amount of human labor needed to underpin search functions and starting to thoroughly map the emerging internet.

In these early days of bots being out in the wild, and before modern analytics, each bot visit would count as a “hit”, the same as if a person had visited the site, making it impossible to tell how much traffic was human and how much was generated by bots.

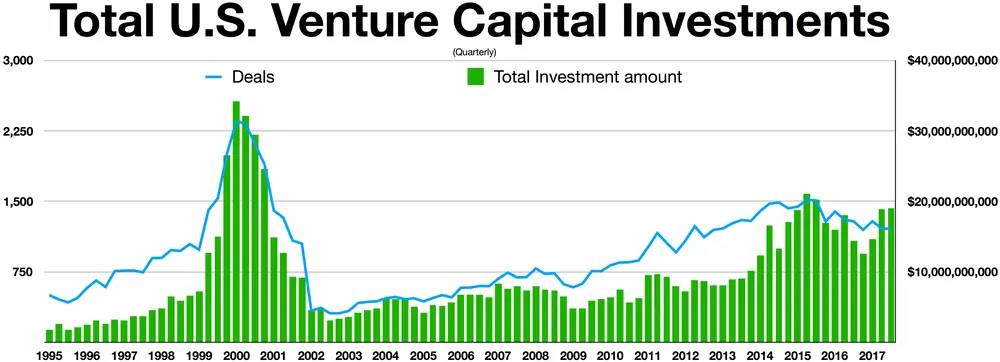

The Dot Com Boom: 1995-2002

Total Traffic: 1995: Estimates vary between 14 Million and 44 Million users. 2002: Between 600 and 800 Million users - numbers were doubling each year.

As the internet grew and accessibility got even easier, more and more people brought routers and computers into their homes. What was the domain of geeky hobbyists and specialist researchers was quickly becoming a utility that could be compared to phones or even electricity.

The Dot Com boom saw businesses go online by the thousand, creating a huge wave of traffic. E-commerce, allowing people to shop from their own homes, became a huge economic force, pushing usage statistics even higher. As traffic surged, the need for businesses to be online grew too, creating even more traffic as online presence became a vital part of business plans.

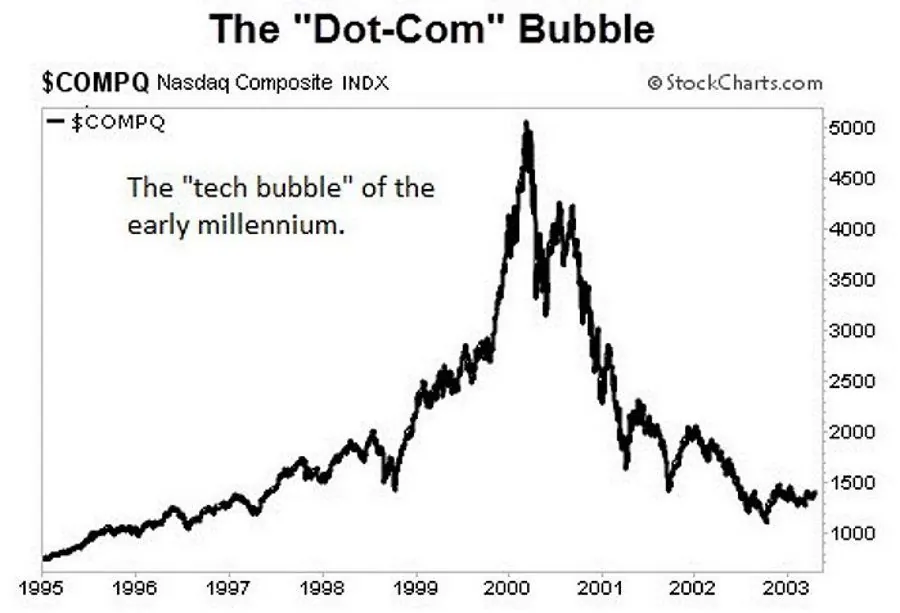

New dot-com startups triggered a huge rise in the stock market, comparable to those seen with the invention of the railroad in the 1840s or the radio in the 1920s. Based on the promise of profitability rather than actual value, the stock of tech companies exploded. Between 1995 and 2000, the NASDAQ Index rose from under 1000 to more than 5000.

This frenzy of activity created a cultural buzz alongside the economic one, bringing even more users online and seeing traffic surging even higher. This saw the internet come into people’s homes for the first time as it evolved from a store of information to one that could provide tangible services- things like ordering products for delivery.

This represented a paradigm shift from a research-based internet towards a more commercialized one.

The Internet Comes Home

The mid 90s saw home computers become a relatively common sight. Dial-up modems allowed people to connect to the internet via their home phone line, allowing access to the rapidly expanding web. Speeds were slow, and if someone called, you’d be kicked offline, but this was revolutionary.

People were starting to visit static websites, browsing for information and products, with the first mass-market examples of e-commerce being from this era. Big brands that we know today, like Amazon and eBay, launched in 1995 and still dominate the e-commerce market- Amazon alone having brought in 2.3 billion views last month.

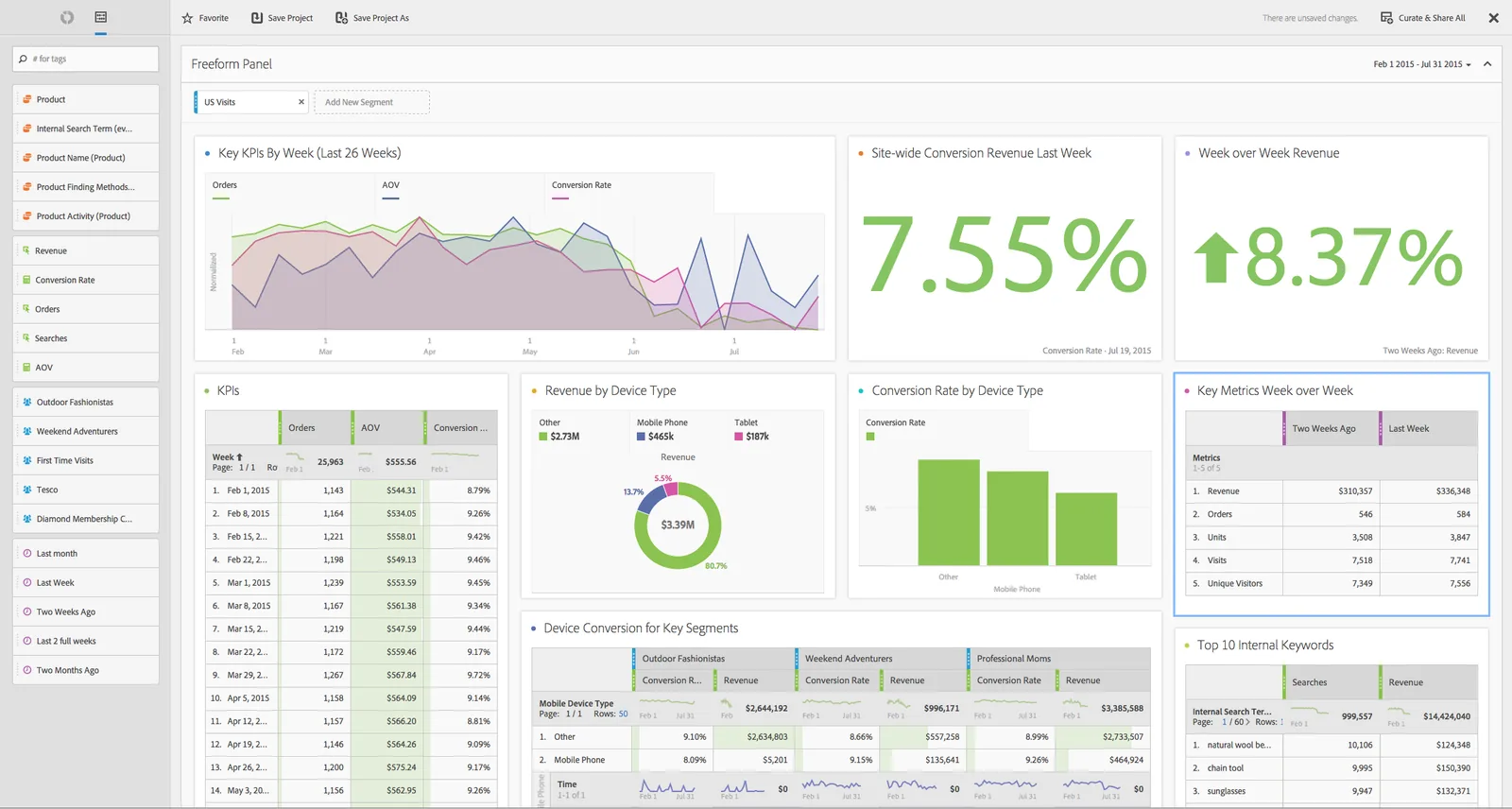

The Birth of Analytics

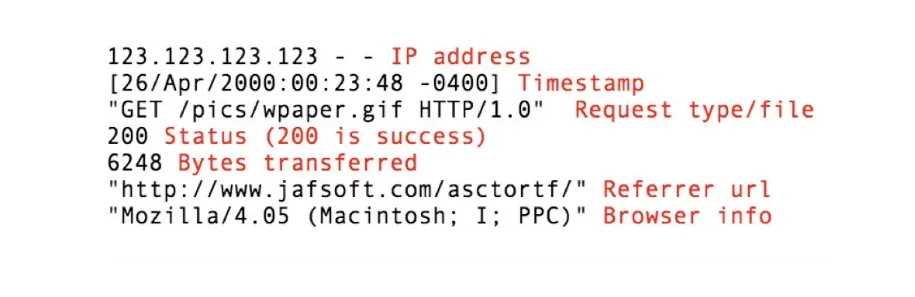

As well as the proliferation of new sites and new companies, the technology behind the internet continued to evolve. Analytics grew from manually checking your server logs to see how much traffic you had attracted to more complex, nuanced tools, providing deeper metrics and, crucially, differentiating between bots and human traffic.

The first of these programs was called Analog and launched in 1995. This automated the process of retrieving server logs and presented the information in an easily read format rather than code. It was now possible for anyone with a website to easily check how much traffic they were getting- digital marketing was born.
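For a rough feel of what a log analyser does, the sketch below reads a few web server log lines and counts hits per page, split into likely humans and likely bots. It is not how Analog itself worked: it assumes the Apache-style "combined" log format, the sample lines are invented, and the `BOT_HINTS` keyword list is a crude, hypothetical heuristic.

```python
import re
from collections import Counter

# Assumption: logs follow the Apache-style "combined" format.
LOG_PATTERN = re.compile(
    r'(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<size>\d+|-) '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)

# Hypothetical heuristic: treat any user agent containing these words as a bot.
BOT_HINTS = ("bot", "crawler", "spider")

# Made-up sample log lines for illustration only.
SAMPLE_LOG = """\
10.0.0.1 - - [01/Mar/1996:10:00:00 +0000] "GET /index.html HTTP/1.0" 200 2048 "-" "Mozilla/2.0"
10.0.0.2 - - [01/Mar/1996:10:00:05 +0000] "GET /index.html HTTP/1.0" 200 2048 "-" "ExampleCrawler/1.0"
10.0.0.1 - - [01/Mar/1996:10:01:00 +0000] "GET /faq.html HTTP/1.0" 200 512 "/index.html" "Mozilla/2.0"
"""


def summarise(log_text):
    """Count hits per (path, visitor kind), skipping lines that do not parse."""
    hits = Counter()
    for line in log_text.splitlines():
        match = LOG_PATTERN.match(line)
        if match is None:
            continue
        agent = match["agent"].lower()
        kind = "bot" if any(hint in agent for hint in BOT_HINTS) else "human"
        hits[(match["path"], kind)] += 1
    return hits


if __name__ == "__main__":
    for (path, kind), count in sorted(summarise(SAMPLE_LOG).items()):
        print(f"{path:12} {kind:6} {count}")
```

A raw hit counter would report three hits here; splitting by user agent shows that one of them was a crawler rather than a person.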

The next year, Web-Counter, which tracked visits, Accrue HitList, which specialized in monitoring downloads, and Omniture SiteCatalyst (now owned by Adobe and called Adobe Analytics), which got even more detailed, entered the market.

With these new tools, marketers could analyse what worked, what users were doing on websites, and create pages that brought in even more traffic.

The nature of what was measured also changed. In the early days of the internet, the metric was MB of data, with humans, bots and crawlers all being counted the same. As analytics advanced, the metric shifted to count individual human visitors. Today, this is what we pay attention to: each human visitor is a potential customer.

Hit counters became a common sight on web pages, displaying the number of visitors that they had attracted as trophies.

Traffic was now Big Business.

Peer-To-Peer Sharing

The later part of this era of the internet saw the rise of peer-to-peer sharing apps like Napster, Kazaa and Limewire. These were used to share files (often illegally) between users. It’s estimated that by 2002, around 60% of all domestic internet traffic was through these services as people pirated content and shared it around.

This presented two problems regarding traffic (beyond all the legal cases that arose during this period): the ISPs of the time struggled to cope with the increase in traffic, and the spread of malware rapidly increased.

The Rise of the Bots

During the dot-com boom period, new bots were developed and unleashed on the internet. Where previous generations had been designed to help users in various ways, these new ones were malicious. Malware had been theorised in earlier generations, but with more people now online, it was only a matter of time before it became a reality.

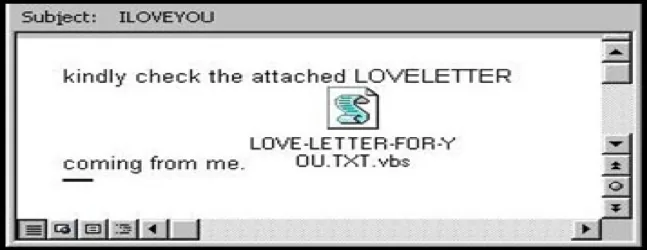

Trojans, worms and other malware quickly propagated through the rapidly expanding internet. The first major security threat from a dedicated piece of malware was the ILOVEYOU virus, which infected over 10 million Windows systems around the world.

This bot infected a PC under the guise of a text file. It would then proceed to delete files and send itself to every contact the user had. New laws to protect systems and their users were quickly developed.

Broadband Is Born

As the internet grew and grew through the boom years of the late 90s, customers demanded more speed (and to stop being kicked off their games when their parents wanted to use the phone).

In 2000, Broadband was launched.

Previously, home internet connections were slow and could not be used when the phone line was engaged (causing endless arguments about whose turn it was to use the line). 56k dial-up modems were the standard, limiting the amount of traffic significantly.

Broadband split the signal, allowing phone lines to be used for both internet and voice traffic at the same time. It was marketed as “always on internet” and gave users much faster speeds and less disruption to their services.

This changed how websites were designed and, in turn, how traffic flowed around the internet. Images became much less burdensome, videos became longer, and sites more complex. People spent more and more time online, generating more and more traffic.

Getting Your Share: The Real Birth of SEO

Alongside this second boom in usage, marketers refined their craft, developing things like Search Engine Optimization in the way we think of it today.

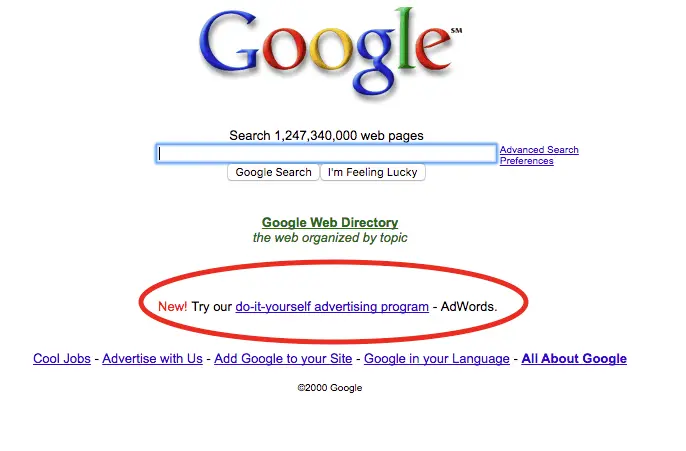

In 1998, Google, which today accounts for about 80% of global search traffic, launched its search engine based on an entirely new concept: ranking pages by importance and relevance. It was no longer enough to stuff keywords into metadata- content had to actually be crafted to bring in traffic.
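Google's approach to ranking by importance is usually known as PageRank: a page matters more when other important pages link to it. The sketch below is a heavily simplified version of that idea, not Google's actual implementation. The four-page graph is hypothetical, and the damping factor of 0.85 is simply the value most often quoted for the algorithm.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by repeated redistribution of rank along links.

    `links` maps each page to the pages it links to; every link target
    must also appear as a key.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page keeps a small baseline share of rank.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, targets in links.items():
            if targets:
                # Split this page's rank evenly among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank everywhere.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical toy graph: "c" is linked to by three other pages.
TOY_LINKS = {
    "a": ["b", "c"],
    "b": ["c"],
    "c": ["a"],
    "d": ["c"],
}

if __name__ == "__main__":
    for page, score in sorted(pagerank(TOY_LINKS).items(), key=lambda kv: -kv[1]):
        print(f"{page}: {score:.3f}")
```

In this toy graph, page `c` ends up on top because it collects links from three other pages, while `d`, which nothing links to, sits at the bottom no matter what keywords it might contain.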

Competition was fierce, and practices were often shady, but the amount of traffic that could be brought in was growing exponentially. In 1999, Google brought in around a billion searches. By the following year, this had exploded to 14 billion.

This new way of doing things quickly changed how people navigated the internet.

With more relevant search results, other search engines like Yahoo! quickly partnered with Google, displaying their results, while others changed their systems to match. Search engine optimization was now the major driver of traffic to a given website.

Search Advertising

In 2000, Google launched AdWords, competing with Pay-Per-Click companies like Overture. In-search advertising, offering a pay-per-impression model to start with, fundamentally changed how companies could be seen.

There were now 3 separate ways of bringing in mass traffic- SEO, display ads and search ads.

The Bubble Pops

The dot-com boom of the late 90s was not to last. By March 2000, the wave of tech investment had reached its peak.

By 2002, investment had fallen by 78%, and what had promised to be a new age of prosperity proved to be, as such promises so often do, a bubble. The NASDAQ fell from a high of 5,048 to 1,139- all but wiping out the exponential growth of the previous years.

Many digital companies shut down as the value of their shares plummeted.

However, people had become used to having the internet in their homes, and while big names like Pets.com vanished overnight, thanks to their stock being wiped out, traffic continued to grow.

The fall of these early giants cleared space for a new version of the Internet: Web 2.0.

Web 2.0: 2002-2007

Total Traffic: 2002: 405PB per month - Estimates vary between 587-631 Million users. 2007: 6403PB per month - around 1.3 Billion users
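To put those two monthly traffic figures in perspective, the short calculation below works out the average yearly growth rate implied by going from 405PB to 6403PB over the five years. It assumes smooth compound growth, which real traffic certainly didn't follow, so treat it as a back-of-the-envelope sketch; the function name is just for illustration.

```python
def annual_growth_rate(start, end, years):
    """Compound annual growth rate implied by a start value, end value and span."""
    return (end / start) ** (1.0 / years) - 1.0


START_PB = 405    # monthly traffic in 2002, from the figures above
END_PB = 6403     # monthly traffic in 2007, from the figures above
YEARS = 2007 - 2002

rate = annual_growth_rate(START_PB, END_PB, YEARS)

if __name__ == "__main__":
    print(f"Overall increase: {END_PB / START_PB:.1f}x")
    print(f"Implied average growth: {rate:.1%} per year")
```

On these numbers, traffic grew nearly sixteen-fold in five years, which works out to a little over 70% growth every single year.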

During the early days of the internet, websites were generally fairly static. Someone would create an HTML site, and it would sit there, generating traffic.

The Dot-Com boom saw sites getting more regular updates, but fundamentally, it was much the same.

With the crash, this changed.

A new generation of web-users rose, creating their own content. Early forms of social media, like MySpace and personal blogs, created a new, collaborative internet where users were creators as much as traffic. This was called Web 2.0.

Collaborative Internet

The Web 2.0 phenomenon started around 1999, with sites like Blogger and LiveJournal. These allowed people to easily post their own content online and generate traffic of their own without any technical knowledge.

Over time, these basic semi-static sites evolved into early versions of social media, and a lot of the platforms that we spend our days scrolling through today.

As the nature of traffic shifted from viewing static pages to curating and creating content of our own, people became ever more engaged, spending more and more of their time creating and browsing. This trend continues today, with people spending nearly 7 hours online each day on average.

People started viewing a wider variety of pages and types of content, with blogs, videos, and social media all seeing massive spikes in traffic.

Today, most of the largest players in terms of internet traffic are based on Web 2.0 principles. Facebook alone currently commands over 2.11 billion daily users.

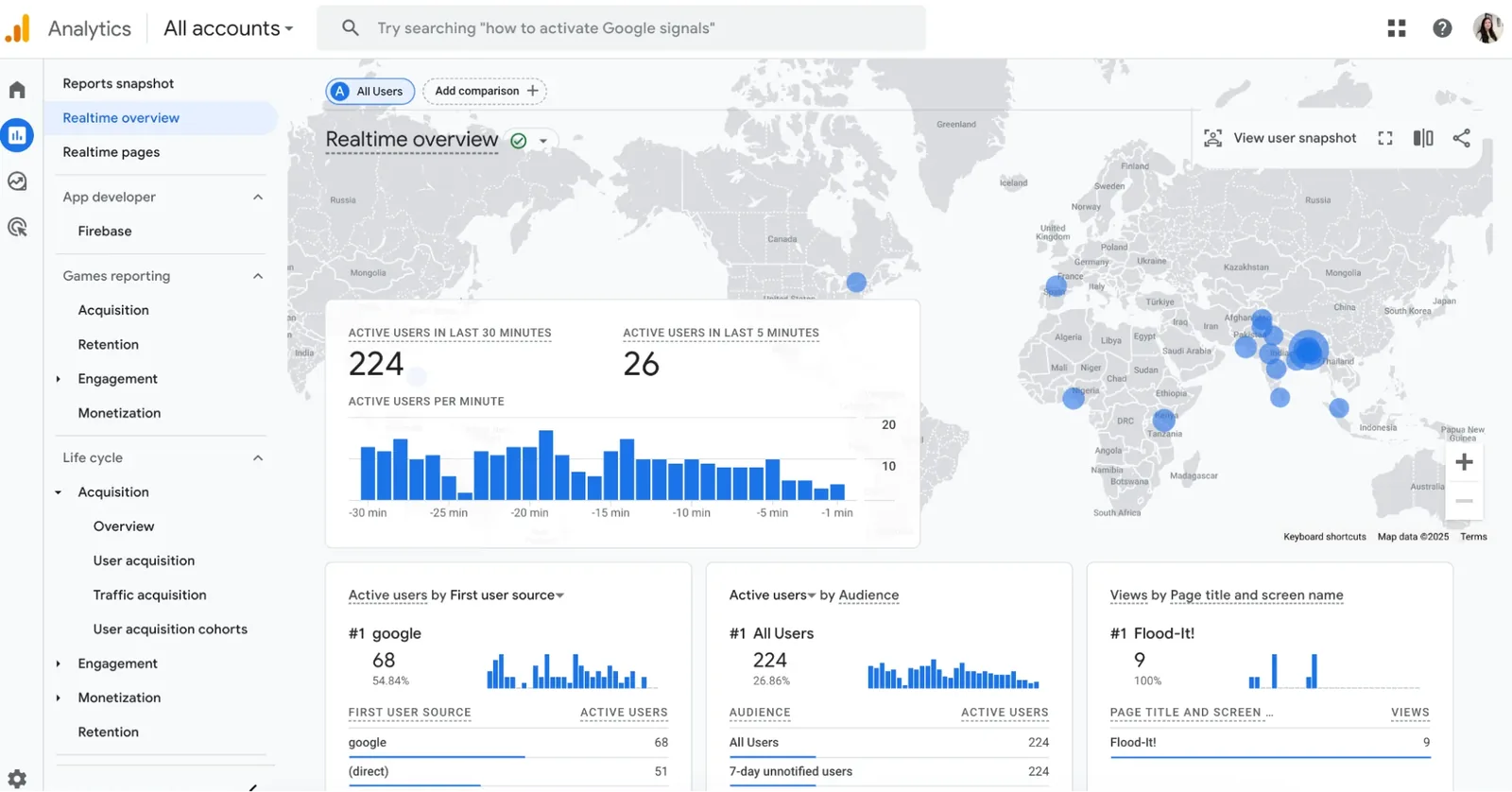

Google Analytics

The way we measured traffic changed during this period, with Google Analytics becoming the favoured tool for website owners and marketers alike. Launching in 2005 after Google acquired Urchin Software Corp, this represented a huge step-change from the old traffic counters.

With far more granular information about visitors and their behaviour on your site, collected via JavaScript, Google Analytics allowed companies to map their users’ journey and develop content to maximise their returns by manipulating traffic flows from search results ever more accurately.

The Mobile Revolution: 2007-2016

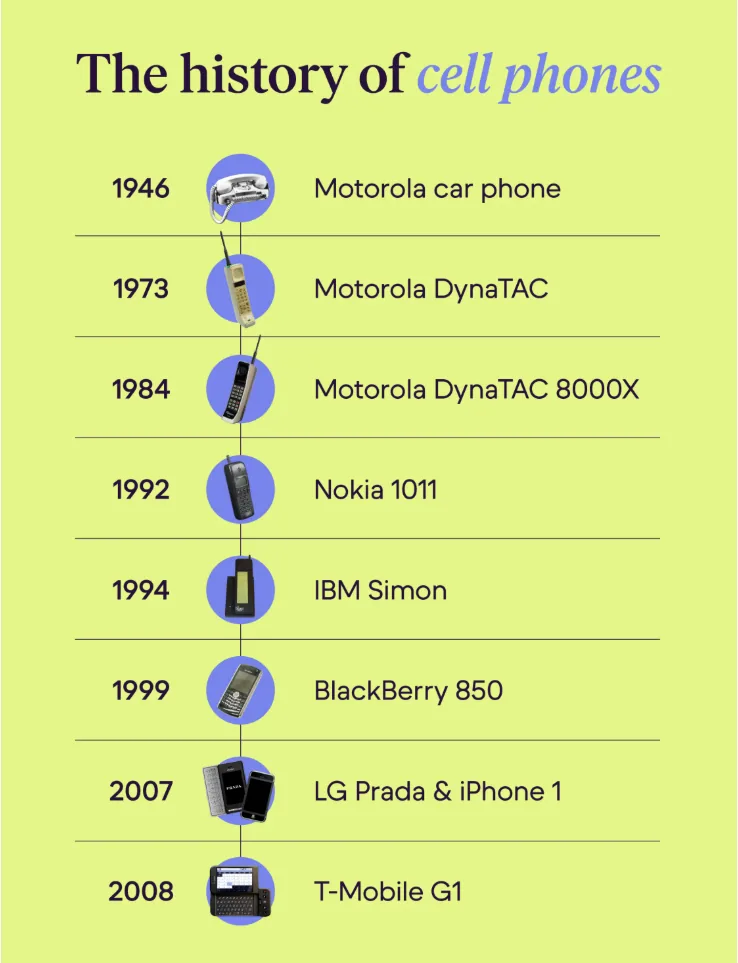

The next major milestone in how we use the internet came with the creation of smartphones. This actually happened much earlier than you would think- the IBM Simon Personal Communicator, released in 1994, is to all intents and purposes the first- but it wasn’t until 1999 that something really resembling modern smartphones was released.

The BlackBerry 850 was the first phone that allowed users to check their emails on the go, marking the start of a shift in how traffic was directed around the internet. Various iterations from BlackBerry popped up, and even the fashion brand Prada got in on the action.

In 2007, Apple launched the first iPhone, and the following year HTC released the first Android device. The nature of web traffic really started to change, moving from PC-browser-based traffic to mobile ecosystems of apps and cloud-based computing.

Mobile Vs Desktop

Now that the internet was fully mobile, the sheer amount of traffic increased hugely: over 53% in a single year.

People were no longer attached to their desks, and they could browse on the train, bus or while socialising. 20% of the global population were now regular users, with 6,430 Petabytes of traffic being generated each month.

Now, traffic was split between mobile devices and traditional computers. Quickly, browsing on your phone or tablet overtook using a traditional home computer or laptop, with the amount of traffic on these devices becoming the largest share by 2016.

Apps took up an increasingly large proportion of people’s time online (currently standing at around 70% of total usage).

Website designs changed to optimize for this new traffic, becoming easier to navigate by touch, and cleaner, more modern designs started to dominate, drawing in traffic through ease of use. Responsive design, sites that change their sizing and layout to suit the device they’re being viewed on, became the norm.

New Technologies

Alongside this shift in where traffic was coming from, new technologies arose to enhance user experiences online.

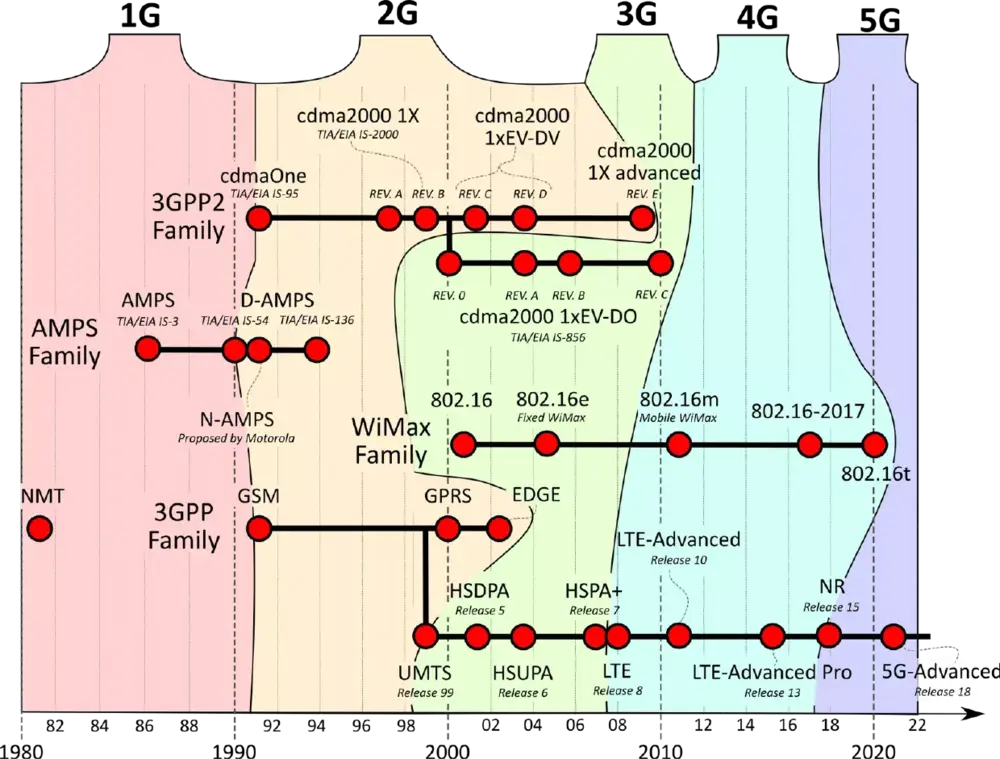

The upgrade of 3G to 4G made access on the go relatively pain-free, increasing traffic for both mobile browsing and dedicated apps hugely. With quicker loading times, mobile users started to consume twice as much data.

Other technological advances, like cloud computing, which delivers remote computing resources like storage, servers, and software, shifted even more traffic online as programs that were once directly installed on a user’s PC moved to the internet.

Though the foundations of the cloud go back a long way (J.C.R. Licklider proposed an “Intergalactic Computer Network” in the 1960s), it was not until Amazon’s EC2 launched in 2006 that it became a reality for consumers.

As mobile traffic started to take over from traditional computing, cloud-based services, especially around the storage of photos, videos, and screenshots, became a bigger and bigger part of daily internet usage.

BotNets And Malware Develop Further

In 2007, the Storm BotNet infected around 50 million PCs, committing crimes from identity fraud to stock price manipulation. This botnet transformed infected machines into ‘zombies’, carrying out its nefarious deeds.

This is just the best known of these networks, with others being behind the rise of spam emails- Cutwail sent out 74 billion messages in a single day- and various other unpleasant online activities.

These networks of infected machines increased traffic across the internet but caused untold damage in the process.

The Modern Internet: 2016-2026

Total Traffic: 2016- 1.2ZB per year

2026: 602.1EB per month (7.2ZB per year, assuming no further growth)- over 6 billion users as of March.
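The conversion behind those headline figures can be sketched in a few lines. This is only a rough illustration: it takes the two totals quoted above at face value, assumes 1ZB = 1,000EB, and assumes growth was smooth over the ten years, which real traffic never is. The variable names are made up for this example.

```python
# Figures quoted in the totals above
ZB_PER_YEAR_2016 = 1.2
EB_PER_MONTH_2026 = 602.1

EB_PER_ZB = 1000   # decimal units: 1 zettabyte = 1,000 exabytes
YEARS = 10         # 2016 to 2026

# Monthly exabytes -> yearly zettabytes (assumes every month matches this one)
zb_per_year_2026 = EB_PER_MONTH_2026 * 12 / EB_PER_ZB

# How many times larger the yearly total is than in 2016
growth_factor = zb_per_year_2026 / ZB_PER_YEAR_2016

# Implied average yearly growth, assuming a steady compound rate (a simplification)
annual_growth = growth_factor ** (1 / YEARS) - 1

print(f"2026 yearly total: {zb_per_year_2026:.1f}ZB")
print(f"Growth since 2016: {growth_factor:.1f}x")
print(f"Implied average growth: {annual_growth:.1%} per year")
```

On these assumptions, traffic has grown roughly sixfold in a decade, which works out to a little under 20% compound growth each year.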

Today, the internet is absolutely ubiquitous. Everything from phones to lightbulbs can be connected and controlled remotely via an app, with every interaction representing traffic of a sort.

When we have a question, the first place we turn is to online services like Wikipedia, Google or ChatGPT. When we want to learn how to do something, from speaking a new language to fixing furniture, we search YouTube for a tutorial or turn to an app.

When we want to buy something, Amazon is often the first port of call. When we want to relax after a hard day browsing, we turn to streaming services like Netflix to unwind. In fact, streaming is now so common that many countries are considering turning off the traditional TV broadcast signals altogether.

Exponential Growth

This represents a mind-boggling amount of traffic. Estimates say that 402.74 million TB of entirely new data hits the internet every single day, and people are eating it up, driving a huge spike in traffic.

In the UK alone, users generate 2,450,000TB of traffic each day- equating to about 36GB per head of population. As access costs come down and infrastructure improves, more and more people around the world are joining in.

Traffic in the modern era is driven largely by media: around 82% of all web traffic goes to video, audio or software as of early 2026, while communication (both direct messaging and social media) and e-commerce make up most of the rest.

YouTube generates around 8.4 billion visits each month- more than the total population of the planet. A single, annoyingly catchy music video hosted there has now been watched over 5.8 billion times, representing more than 46,350 years of collective viewing time.
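As a quick sanity check on that viewing-time figure, the two numbers can be turned into an average watch time per view. This is a back-of-the-envelope sketch only: it uses the view count and total years quoted above, and assumes a 365-day year. The variable names are illustrative.

```python
# Figures quoted above
VIEWS = 5.8e9            # total views of the video
VIEWING_YEARS = 46_350   # collective viewing time in years

MINUTES_PER_YEAR = 365 * 24 * 60  # assumes a 365-day year

# Total minutes watched, spread evenly across every view
total_minutes = VIEWING_YEARS * MINUTES_PER_YEAR
minutes_per_view = total_minutes / VIEWS

print(f"Average watch time: {minutes_per_view:.1f} minutes per view")
```

That comes out at a little over four minutes per view, which is about the length of a typical music video, so the two figures are at least consistent with each other.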

The 2020s: Pandemic And Network Upgrades

Shortly before the pandemic hit, the first places started to roll out 5G infrastructure.

Starting in South Korea- one of the world’s most connected countries- and quickly spreading through the US, Europe and China, it promised to revolutionise the now utterly dominant mobile network.

With greater speeds and bandwidth, this has been a major contributing factor to the continuous growth in daily internet traffic as more and more places bring it online.

While the trend has been upwards since the invention of the internet, traffic saw a particularly huge spike during the COVID lockdowns. Within a few days of the first lockdowns being announced, traffic grew 20% as people looked ever more online for connection to the outside world.

This upward growth did die back slightly, but it never quite returned to pre-pandemic levels. Cultural shifts towards working from home- evidenced by the fact that there were 4 times as many job adverts offering home-based positions in 2023 as there were in 2019- seem here to stay.

Each of these positions pushes the total traffic up a little more each day.

New Demands

With the proliferation of online services, ultra-high definition streaming and working from home, the infrastructure of the internet has had to adapt to meet demand.

While traditionally websites would be stored on a single, remote server, this can now represent a bottleneck, slowing loading times and driving traffic away towards a service’s competitors. To counteract these demands, many high-intensity services now use Content Delivery Networks (CDNs).

These networks bring content closer to the user, providing cached versions of the content, reducing the amount of bandwidth each access takes up and hugely reducing loading times.
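The caching idea can be illustrated with a toy model. This is a minimal sketch, not how any real CDN is built: the `Origin` and `EdgeNode` classes and the `/video.mp4` path are invented for the example, and real networks also handle cache expiry, many edge locations and routing users to the nearest one.

```python
class Origin:
    """Stand-in for the single, remote server that holds the original content."""

    def __init__(self, pages):
        self.pages = pages
        self.requests_served = 0  # counts how often the origin is actually hit

    def fetch(self, path):
        self.requests_served += 1
        return self.pages[path]


class EdgeNode:
    """Stand-in for a CDN location near the user that keeps cached copies."""

    def __init__(self, origin):
        self.origin = origin
        self.cache = {}

    def get(self, path):
        # Only go back to the origin the first time a path is requested
        if path not in self.cache:
            self.cache[path] = self.origin.fetch(path)
        return self.cache[path]


origin = Origin({"/video.mp4": b"x" * 1_000})
edge = EdgeNode(origin)

# 100 nearby users request the same file through the edge node
for _ in range(100):
    edge.get("/video.mp4")

print(origin.requests_served)  # 1
```

In this toy version, a hundred requests cost the origin server a single fetch. Everything else is answered from the nearby copy, which is where the bandwidth and loading-time savings come from.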

Removing these pain points for users and companies alike has, again, driven us ever more online, particularly for our entertainment needs.

The Rise of AI

With the mainstream arrival of generative AI in 2022, the amount of traffic passing through the internet rose again. Not only are the bots drawing in and creating traffic of their own, they’re changing how existing traffic navigates the internet.

Around 1% of all traffic (which sounds small, but if you’ve read this blog, you’ll understand that that is a mind-bending number) comes directly from a conversation with a chatbot. Each month this number rises.

While it’s impossible to put a figure on exactly how many clicks come indirectly from these conversations leading to a traditional search, it’s likely to be an increasingly large number.

While this is still relatively new tech that we’re just starting to adapt to, the future for the total amount of web traffic and how it’s directed around the internet hasn’t been this exciting since Mr Berners-Lee posted that first website all the way back in 1990.

AI and Bots

This new development has created new problems as well as new opportunities: AI chatbots can help users generate text, videos and images, offer recommendations and other useful services, but can also be turned towards causing harm.

AI is now being used to influence decision-making and to produce and post misinformation, which bots then spread through social media. It also suffers from bias thanks to incomplete information. On top of this, it has driven a huge spike in cybersecurity threats, including attempts to break encryption, posing problems for companies, individuals and countries alike.

While it is a revolutionary tool, creating a whole new flow of web traffic, we’re still coming to terms with both its positive and negative aspects.

The Future: 2026 and onwards

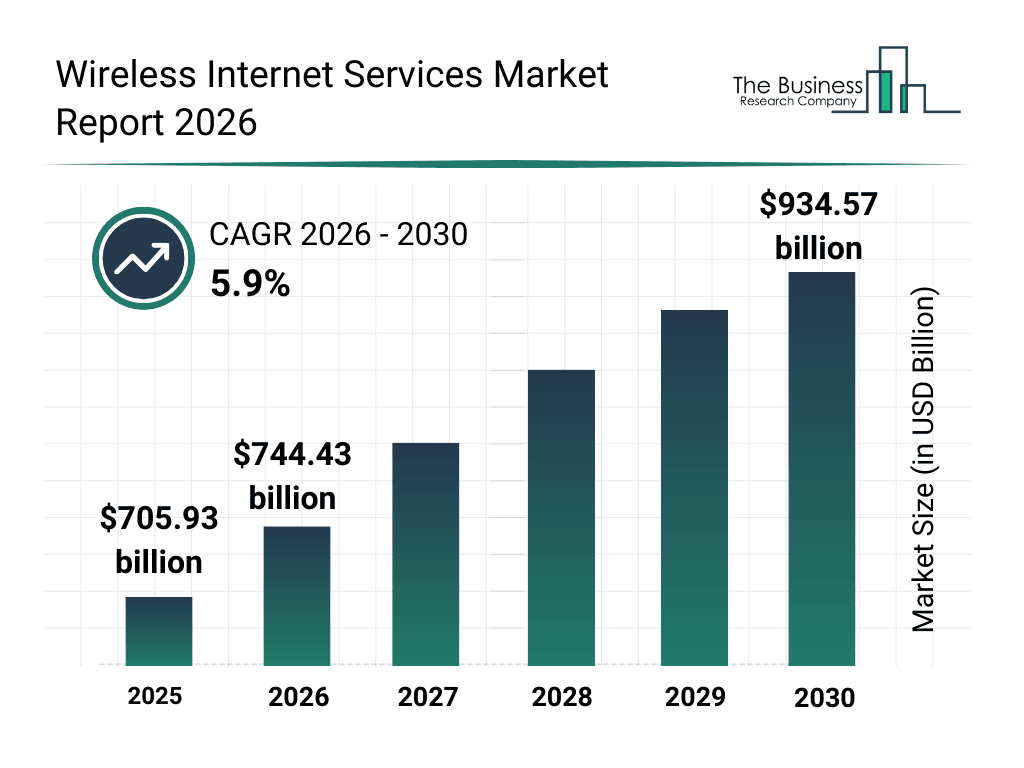

Now that we know where traffic comes from today and how we got here, the next question is what the future of web traffic will look like. We can get a pretty good idea of the direction things are moving in from looking at what is in development now:

The Future of Mobile Tech

6G is already well on the way to being fully developed- even before 5G has finished rolling out everywhere. Promising even greater speeds, better bandwidth and AI optimization of the network, the aim is for peak download speeds of over 100Gbps, potentially 1000 times faster than 5G.

In human terms, this makes most modern downloads effectively instantaneous. In terms of machine-to-machine communications, this could be absolutely revolutionary. This is particularly exciting when you consider the possibility of unlimited communication: land, air, sea and even space connectivity becomes relatively simple.
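To put "effectively instantaneous" into numbers, here is a rough sketch of download times at the headline 6G speed. It reads the target as 100 gigabits per second and assumes that peak rate is sustained for the whole download, which real networks rarely manage. The file sizes are assumptions picked for illustration, and `download_seconds` is a made-up helper.

```python
def download_seconds(size_gigabytes: float, rate_gbps: float) -> float:
    """Time to download a file, assuming the full rate is sustained throughout."""
    size_gigabits = size_gigabytes * 8  # 8 bits in every byte
    return size_gigabits / rate_gbps


PEAK_6G_GBPS = 100  # headline 6G target, in gigabits per second

# Example file sizes are assumptions for illustration only
examples = {
    "HD film (assumed 5GB)": 5,
    "Large video game (assumed 50GB)": 50,
}

for label, size_gb in examples.items():
    print(f"{label}: {download_seconds(size_gb, PEAK_6G_GBPS):.1f} seconds")
```

Under those assumptions, a full film arrives in under half a second and a large game in around four seconds.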

When we consider how much traffic was generated at the invention of 4G and then again when 5G launched, the potential increase is absolutely huge.

Remote Access

Companies like Starlink and their Chinese competitors already serve some of the world’s most remote communities. Making use of a network of satellites, they beam high-speed, low-latency internet signals, providing good quality connections to those who would otherwise be at the mercy of old infrastructure or totally cut off from the internet.

With plans for global coverage quickly coming to fruition, a new connectivity revolution is coming. This could bring affordable internet access to millions of people globally, opening up entirely new industries and markets around the world and significantly boosting traffic flows.

This technology is already being rolled out on transport networks, including ships and planes, allowing people to browse as they travel far more reliably.

The Dead Internet: The Bots Finally Win?

With the increase in AI activity, proponents of the Dead Internet Theory are feeling pretty justified.

This theory says that eventually, bots will form the vast majority of internet traffic, and the content will be generally automatically generated and algorithmically manipulated.

As it stands, estimates suggest that around 20% of social media posts are generated by bots, rising to around 40% during times such as elections.

These numbers seem set to increase as AI gets better, casting doubt on the reliability of online sources of information and discussion, especially considering that current estimates say 65% of these bots are spreading misinformation.

As this phenomenon becomes better known, it wouldn’t be unreasonable to expect the nature of traffic to shift dramatically in response.

Web 2.0 services like social media may start haemorrhaging users as people move to more reliable sources of information.

If the likes of Facebook and X don’t find ways around this problem, the future of human internet traffic might shift back towards a Web 1.0 model or may evolve into something entirely new.

Final Thoughts: Exponential Growth and Evolution

From humble Cold War beginnings of just a few high-level research facilities, through the commercialization of the Dot-Com boom and the rebirth and reinvention of the internet that we’re currently living through, web traffic has never stopped growing.

While it may have started as a few researchers sharing information, today, web traffic is the backbone of the global economy. Every day, the numbers continue to tick upwards.

Every business, creator or even social media poster has to take notice of how this traffic flows. Where once we were satisfied to browse static HTML sites, now we demand a creative stake. Where text was once enough, now traffic is driven by video content.

Who knows where the traffic will go tomorrow?
